In my last piece, I wrote about Scott Brinker's composable canvas and the distance most organizations still have to travel before they can operate that way. I ended with the observation that data gravity and organizational distance are two forces that haven't quite caught up with each other yet, and that the gap between them isn't a problem to solve in one go; it's something to navigate.

That question, how far are we from being able to operate this way, has kept coming up in conversations since. So this piece is an attempt to name what's actually sitting inside that distance. Because I don't think it's mainly technical. And I don't think it's mainly about architecture.

Tell me if the following sounds familiar...

A company has invested seriously in its stack. The tools are credible, sometimes genuinely sophisticated. The team is capable. Leadership is engaged. And yet something keeps not working. Programs stall, and platforms underdeliver. The same conversations happen in different meetings. A new vendor gets brought in, things improve for a quarter, and then the same friction returns wearing slightly different clothes.

What's actually happening is that the organization has moved faster than it was ready to. Not recklessly, not stupidly. In many cases, entirely reasonably given the pressure it was under. The market kept offering new answers (AI, composable architectures, warehouse-native platforms, real-time decisioning), and the gap between what was possible and what competitors might be doing with it created a specific kind of anxiety. So decisions got made. Tools got bought, and transformations got launched.

That distance between what an organization believes it's ready for and what it can actually sustain in practice is what I've started calling the Martech Readiness Gap. It's not a new problem. But it's getting wider, faster, and more expensive to ignore.

Why Martech's Law makes this worse

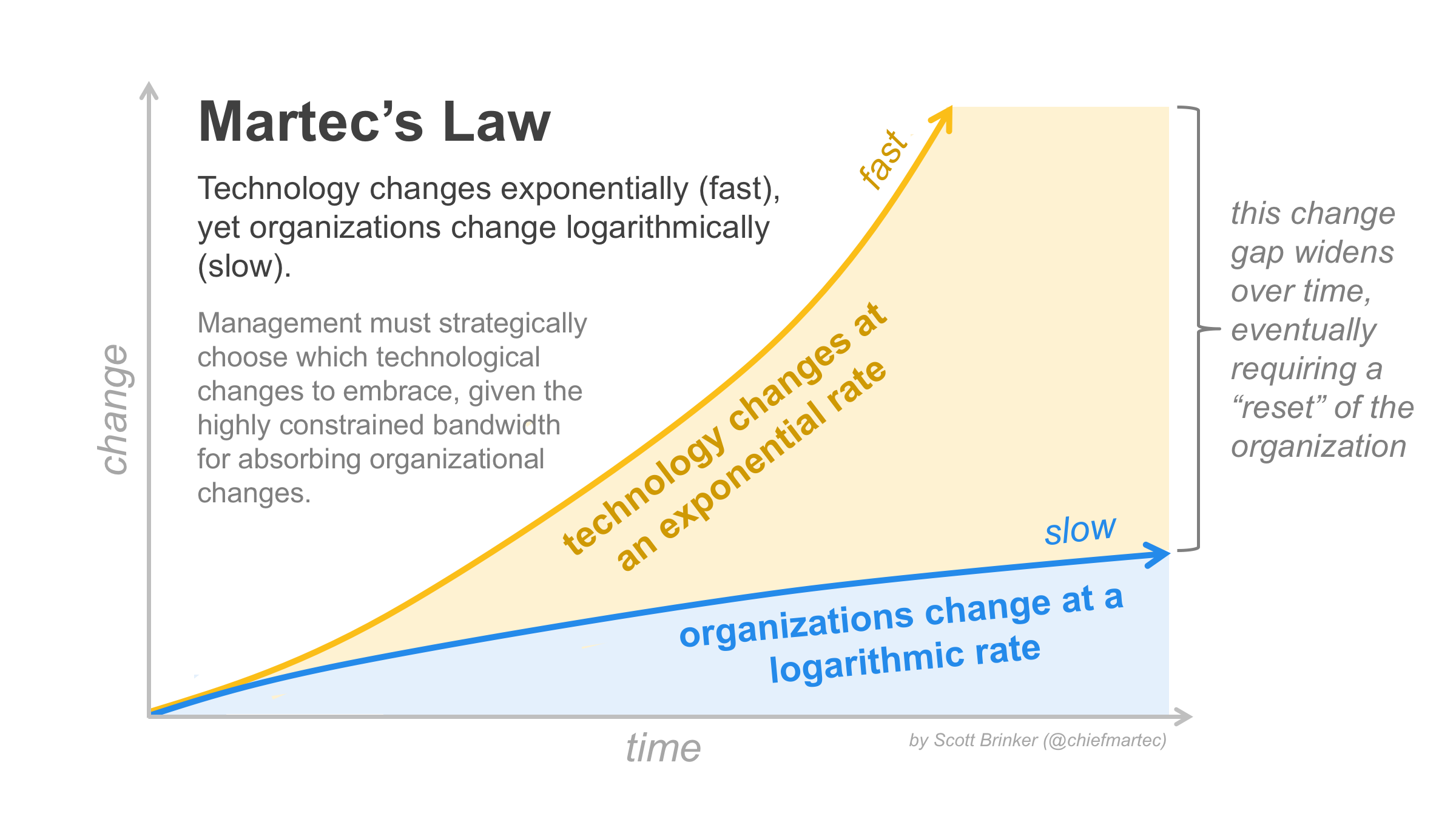

If you've been following my writing for a while, you'll know I keep coming back to Martech's Law. Scott Brinker's observation that technology changes faster than organizations can absorb it was originally offered as a comment on the pace of the vendor landscape. What it describes in practice is the structural condition that produces the readiness gap in the first place.

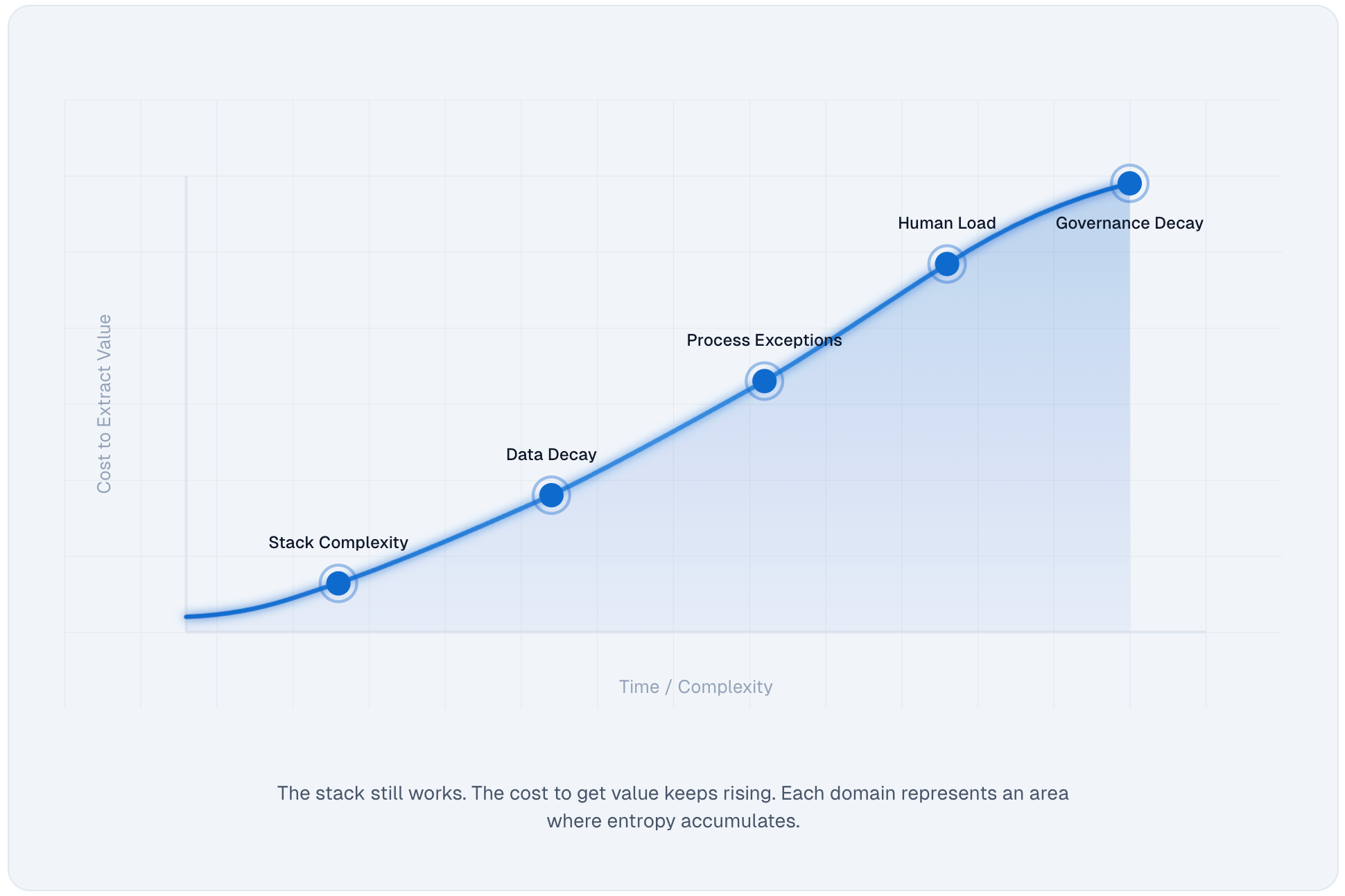

I've written before about what I called the Second Law of Martech, the idea that stacks don't collapse, they just ask for more. More meetings, more exceptions, more human energy spent on cognitive load, coordination overhead, and the quiet maintenance work of preventing drift from becoming visible failure. That rising cost is entropy in its most operational form. What I've come to see more clearly since writing that piece is that entropy is downstream of the readiness gap. The gap is what produces the condition in the first place. Organizations that move faster than they're ready to find that the energy required to hold everything together just keeps climbing, and at some point that energy crowds out the actual work.

I asked a version of this question in an earlier piece on the real Martech ROI equation:

How much energy are you spending holding the sandcastle together, and is it giving you the ROI you think it is?

At the time I framed it as a financial question. What I've come to understand since is that it's really a readiness question. The energy spent compensating for a gap that was never honestly assessed is the hidden line item that never appears in the business case but always shows up in the results.

The vendor ecosystem is not slowing down. If anything, AI has accelerated the rate at which new capabilities are being surfaced, marketed, and made to feel urgent. Every six months there's a new architecture to understand, a new category to evaluate, a new risk of being left behind. For organizations already struggling to operate what they have, that pressure doesn't produce better decisions. It produces faster ones. And faster decisions, made before the foundations are ready, are exactly how the gap grows.

What it actually looks like

The readiness gap rarely announces itself clearly. It tends to show up as a collection of symptoms that get misread as separate problems.

A CDP that costs more than it produces. A personalization program that never quite gets to personalization. A data team and a marketing team that both work hard and somehow always want different things. A transformation program that delivers a new platform but inherits all the old confusion. A stack that everyone agrees is too complex but nobody can agree on how to simplify. I am sure we can all share an example.

I walked into something close to this at a major UK broadcaster not long ago. They hadn't come looking for a new platform. They came because the current setup had hardened into something expensive to run, hard to change, and oddly unaccountable. A major architectural migration had already happened. On paper it should have simplified things. In practice it had introduced more interfaces and more ambiguity. Different teams had their own versions of the truth, their own diagrams, their own priorities. The technology wasn't the problem. The organization hadn't been ready to absorb the change it had already made.

I've seen a version of this across sectors: insurance, media, healthcare, financial services. The surface problems look different. The root cause is consistent and sector-agnostic.

The uncomfortable part

If you've read my piece on why chasing shiny CDP features leaves marketers feeling like imposters, over on MarTech.org, you'll recognize what comes next.

There's a psychological dimension to this that the industry tends to sidestep. Teams under this kind of pressure develop a specific kind of insecurity. I wrote there about the sandcastle dynamic, how vendors keep raising the waterline with new features and AI capabilities while most practitioners are still doing the genuinely hard work of getting reliable data out the door. The cruel irony is that the basics aren't basic at all. But in a market that celebrates the bleeding edge, doing foundational work well feels like falling behind.

Read my full article on MarTech.org

That insecurity distorts decision-making in predictable ways: reactive buying, over-scoped projects, and a tendency to adopt the language of sophistication before the operating model can support it. Terms like AI-native, composable, real-time, and agentic become identity claims rather than operational realities. And the gap between the claim and the reality is precisely where the money disappears.

I've also noticed, and this came up repeatedly in conversations while writing for MarTech.org last year, that most organizations spend more on unused SaaS features than on training the people managing them. They invest in tooling, integrations, orchestration layers, and AI add-ons, but when you ask about the budget for capability development the answer is usually silence. That imbalance is both a symptom of the readiness gap and one of the reasons it persists.

Read my full article on MarTech.org

Where the work actually sits

Getting honest about the gap requires holding two things at once. The ambition is legitimate. Wanting to operate a modern, effective Martech stack is a reasonable thing to want. And the current operating model may not be ready to support it yet, which means the next expensive move is likely to produce the same result as the last one.

In my experience the gap tends to show up consistently across three dimensions: how an organization is structured to make and sustain Martech decisions, what it's genuinely capable of operating, and whether its processes are reliable enough to support the things it wants to do. Each of those deserves more than a sentence, so I'll get into them properly in the next piece. But taken together they tend to explain most of what looks like a technology problem from the outside.

If you've seen this challenge in your own organization, or if you're currently sitting inside one of those stalled programs wondering why the new platform didn't fix it, I'd genuinely like to hear about it. Drop a comment or find me on LinkedIn. This is where most of the real work in Martech still happens, and not enough of us are talking about it honestly.

Your stack isn’t failing.

It’s absorbing more effort than it used to.

The Second Law of Martech scan helps you see where organizational energy is being spent just to keep things working, and where that cost is starting to crowd out real value.
