Last week two things crossed my desk that, on the face of it, had nothing to do with each other.

The first was an email from an investor running an internal AI contest across six of their portfolio companies, one of which I used to work with. The framing was good, and I was happy to see this development. They were asking employees (and somehow former contractors like me) to record a two-minute screen capture of an AI workflow they'd started using. A 15-minute task done faster. A draft response written in half the time. You get the idea.

Three winning teams, a thousand euros each in travel vouchers, and an independent jury. That was the whole thing.

The second showed up on Reddit. An open letter from the UK Department for Science, Innovation and Technology and the Cabinet Office, signed by the Secretary of State and the Security Minister. Serious stuff, yet addressed, plainly, to "Dear business leaders." Not addressed to me, obviously, but worth reading anyway.

The open letter from the UK Department for Science, Innovation and Technology and the Cabinet Office, April 2026.

It said, among other things, that the UK's AI Security Institute had assessed that frontier model capabilities are now doubling every four months, compared to every eight months previously. That recent tests of advanced AI models, including Anthropic's new Mythos, indicate that AI cyber capabilities are advancing faster than previously envisaged. That criminals will not just target government systems and critical infrastructure. They will target ordinary companies, of every size, in every sector. Attackers go where defenses are weakest.

I read them the same morning. And I sat with them for a while.

For about two cups of coffee.

The healthy thing first

Look, the investor email is the healthy version of where most companies are, or really should be aiming to be. AI inside organizations has, for a couple of years now, mostly arrived from one direction. Imported. Pitched to leadership. Bought. Then handed down, with some instructions and a rollout plan. Meanwhile the actual work, the 15-minute task, the email triage, the spreadsheet wrangling, was either being automated by individual employees on their own initiative, or not being touched at all because nobody had told them they were allowed.

Asking employees to surface what they're already doing is, genuinely, a smart move. It's a kind of organizational stethoscope.

Where is AI actually being used?

By whom?

For what?

You learn more from that than from any maturity assessment a vendor will sell you.

It also has the rare quality of being practical. There's no transformation theatre. No call to "reimagine the future of work". Just: show me what you did last Tuesday that worked.

I'm strongly in favor of this. I think more investors and more leadership teams should run something like it. Learning to walk before you run is not a cliché in this case. It's literally what's happening. People are figuring out where AI fits in their day, one workflow at a time, and the smart organizations are paying attention.

And then the UK letter

The Cabinet Office letter is doing something different. It's not asking. It's warning.

What caught my eye, as I scanned it, was the specific framing.

"We are entering a period in which the pace of technological change may test every institution in the country."

It may sound like a rogue version of Martec's Law, but it is not a sentence civil servants write lightly. And a figure like frontier capabilities doubling every four months instead of every eight is the kind of thing that gets argued over before it ends up in a signed letter from two ministers.
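For a sense of what that change in doubling time means, here is the back-of-the-envelope arithmetic as a few lines of Python. It assumes clean exponential growth, which is my simplification and not something the letter claims, and `growth_over` is just a name I made up for the sketch.

```python
def growth_over(months: float, doubling_period_months: float) -> float:
    """Growth multiple after `months`, assuming (my simplification)
    a steady doubling every `doubling_period_months`."""
    return 2 ** (months / doubling_period_months)


# Compare the old and new doubling periods over one and two years.
for period in (8, 4):
    one_year = growth_over(12, period)
    two_years = growth_over(24, period)
    print(f"doubling every {period} months: "
          f"x{one_year:.1f} in a year, x{two_years:.0f} in two")
```

Under that assumption, a year of progress goes from roughly 2.8x to 8x, and two years goes from 8x to 64x. Halving the doubling time squares the growth over the same stretch, which is why the number matters more than it first appears to.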

And then the asks, which are deliberately unsexy. Take cybersecurity seriously at the top of your organization. Don't delegate it to IT and forget about it. Get the basics right with Cyber Essentials. Sign up for the Early Warning Service. Plan and rehearse how you'd respond to a significant incident.

This is a government, in writing, telling business leaders that the same AI capabilities employees are using to draft emails faster are, on a slightly different vector, being used by people trying to break into their networks. And that the pace of that is changing faster than most boards have processed.

The iceberg

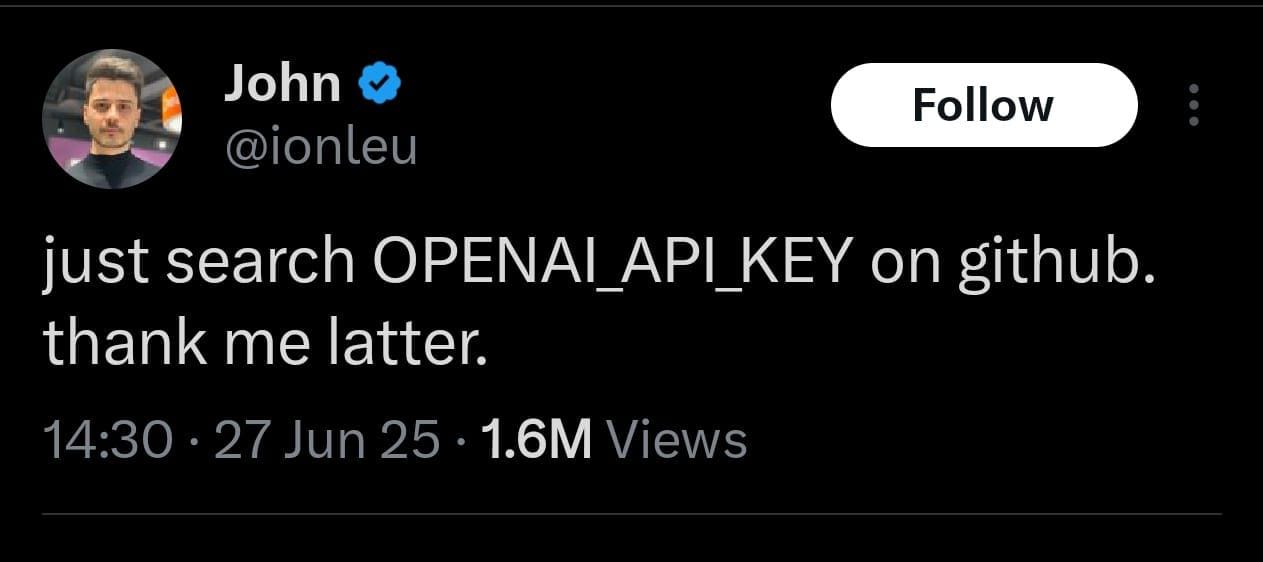

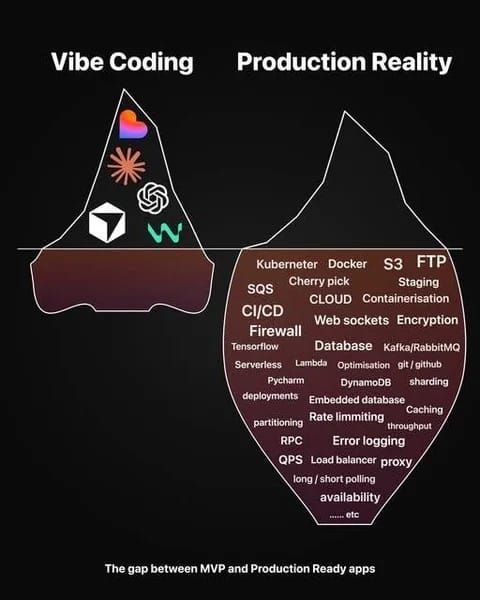

There's an image that's been doing the rounds that captures part of what I'm trying to say better than I can in prose.

The tip is what we see and celebrate. The Lovable logo, the ChatGPT logo, the Cursor logo, the few tools that make the AI workflow demo look effortless. The investor's contest submissions will mostly live up here. Someone showing a clever prompt, a useful agent, a workflow that saves an hour.

The mass below the waterline is everything the workflow will eventually depend on. Databases. Encryption. Load balancers. Rate limiting. Error logging. Web sockets. Caching. The boring infrastructural reality that decides whether a clever prompt, run a thousand times a day across a business, holds up or collapses.

I think most contests like the one I described will surface tip-of-iceberg work, because that's what employees can see and record in a two-minute screen capture. That's fine. That's the point. But the people watching the contest results need to remember what's underneath, because that's where the actual risk lives.

What the two emails are saying together

The two of them, read together, are describing the same underlying reality from opposite ends. I'm still working out how to articulate this cleanly, so bear with me.

At one end, employees are integrating AI into their workflows organically. Some of it is brilliant. Some of it is duplicating work that the company across the street has already automated. Some of it is running through personal accounts on personal devices with company data inside the prompts. Most of it is invisible to the people who'd need to know if something went wrong.

At the other end, the threat surface is getting wider, and quickly. Not just from external attackers. From the unmanaged sprawl of AI use inside the building, sitting on top of infrastructure that has its own ongoing supply of disclosed vulnerabilities to patch.

The contest model is a great way to find out what's happening. It is not, on its own, a way to govern what happens next. And the gap between those two things, between "we asked people to share what they're doing" and "we have a coherent picture of how AI is being used across the company and what the risks are", is where most organisations will sit for the next year or two.

I don't say that to be gloomy. I say it because I think the contest is the right first move and the wrong last move. You learn what's there. Then you have to do something with what you learned.

What "doing something" actually looks like

This is the part where I'm tempted to give you a model and I'm going to resist, because I'm honestly not sure the model exists yet. And, more honestly, I am not a model kind of person. What I have are observations from inside the work.

Most companies I see don't lack AI ideas. They lack a way to triage them. The submissions a contest like this surfaces are going to range from "I use ChatGPT to summarise meeting notes" to "I built a workflow that pulls customer data from three systems, runs it through a model, and writes back into the CRM." Those two things need very different governance responses, and most leadership teams I've worked with don't yet have the language to tell them apart.

There's a piece in the Cabinet Office letter that I think applies directly. They distinguish between things you delegate to IT and things that belong at the top of the organisation. The same logic applies to AI inside companies. Drafting a response faster is not a board-level concern. Pulling customer data through a third-party model, deployed on infrastructure with a steady drip of high-severity CVEs, is. The contest will surface both, mixed together, and someone needs to do the sorting.
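This is not the model I just said I don't have. It's only what the sorting question looks like if you force it into a few lines of Python. The `Submission` fields, the `triage` function, and the three buckets are all my own invention for illustration; a real version would need questions that fit the company in front of you.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """One contest entry, reduced to a few yes/no questions (hypothetical)."""
    name: str
    touches_customer_data: bool = False
    uses_third_party_model: bool = False
    writes_back_to_systems: bool = False
    runs_on_personal_account: bool = False


def triage(s: Submission) -> str:
    """Sort an entry into one of three illustrative buckets."""
    # Customer data leaving the building is the case that belongs at the top.
    if s.touches_customer_data and (
        s.uses_third_party_model or s.runs_on_personal_account
    ):
        return "leadership"
    # Anything that touches company systems or data deserves a second look.
    if (
        s.writes_back_to_systems
        or s.touches_customer_data
        or s.runs_on_personal_account
    ):
        return "IT review"
    # Everything else: note it, thank the person, move on.
    return "light touch"


notes = Submission("Summarise meeting notes", uses_third_party_model=True)
crm = Submission(
    "Customer data from three systems into the CRM",
    touches_customer_data=True,
    uses_third_party_model=True,
    writes_back_to_systems=True,
)

for entry in (notes, crm):
    print(f"{entry.name}: {triage(entry)}")
```

The two examples from earlier land in different buckets: the meeting notes get a light touch, the CRM workflow goes to leadership. The rules themselves are debatable. What matters is that someone has written down which questions get asked of every submission.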

The other observation, and this one I hold less firmly, is that the operator-led approach probably can't be governed top-down without killing what made it valuable in the first place. The reason employees are surfacing useful workflows is that nobody told them they couldn't. The minute that becomes a compliance gauntlet, the surfacing stops, and the work goes back underground where you can't see it. So the governance has to be light enough to keep the visibility, but real enough to catch the things that matter. That's a hard balance and I don't think anyone has it figured out yet.

Where I land, for now

I think the investor running that contest is doing the right thing. I think the UK government writing that letter is doing the right thing. And I think most boards will read the contest results in July and the cyber letter in passing, and not connect them.

That's the gap I'd want to be in the room for. Not the contest itself, which is fine on its own terms. Although a paid holiday would be nice. But the conversation that comes after, when the submissions are in and someone has to look at the spread and ask what it means for how the company actually works, what's running where, and whether the iceberg under the demo is solid enough to hold the weight people are about to put on it.

If you're running something similar, or you're about to be, that's roughly the territory my Martech Foundry work is in. Once people start using AI tools in earnest, somebody has to hold the picture together. That's the part I work on. martechfoundry.com

Curious if anyone else is seeing this pattern. I know one email and one open letter in one week is a small sample. But it's a sample.
